The launch of ChatGPT-4 by OpenAI caused a significant stir, as the latest AI language model is a notable improvement over its predecessor and boasts enhanced capabilities. However, although GPT-4 is said to process images, ChatGPT 4 refuses to look at pictures.

If you have prior experience with ChatGPT models, you may have noticed that they could only process text input. However, the most significant new feature of the GPT-4 model is its multimodal capability. This means that GPT-4 can now accept prompts comprising both text and images.

According to OpenAI, the new generation relies on a “large multimodal model” that can accept longer text inputs of up to 25,000 words, a significant increase from the previous limit of 4,000 words. The model is also designed to be safer and more factual. However, ChatGPT 4 refuses to look at pictures from its users.

ChatGPT 4 Refuses to Look at Pictures

OpenAI has not yet released the image description feature to the general public, and users are eagerly anticipating its launch. Recently, Microsoft released the Bing AI chatbot, which uses GPT-4, giving the public a glimpse of this tool. It can also analyze and describe photos.

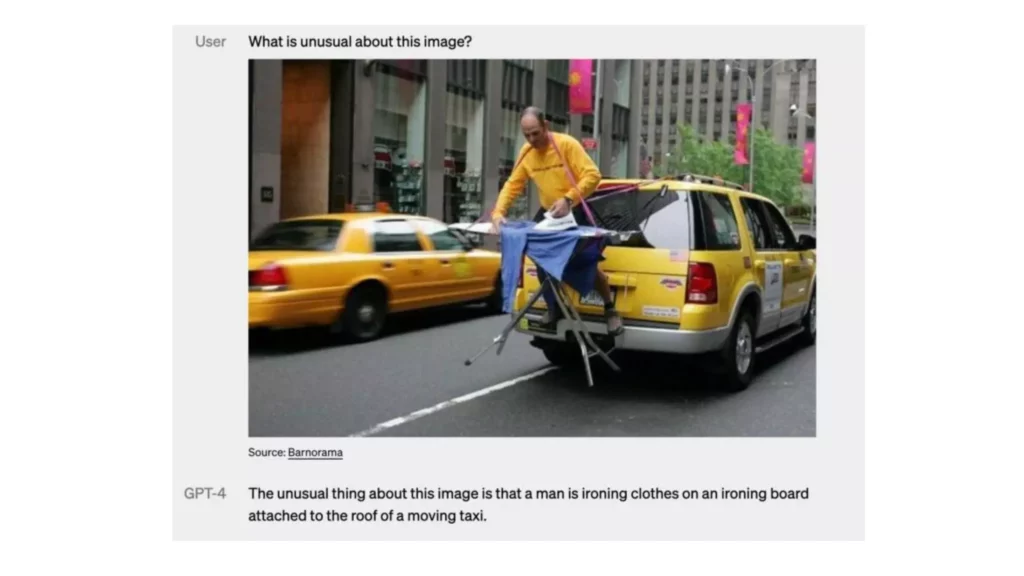

The ChatGPT-4 demo held by OpenAI showcased the features expected from the latest version of ChatGPT. The AI’s ability to interpret and comprehend images goes beyond simply receiving them. This understanding can be applied to prompts that incorporate both text and visual inputs.

GPT-4’s multimodal capacity applies to all forms of text and images, including documents with text and photos, hand-drawn or sketched diagrams, and screenshots. The output of GPT-4 is expected to remain as competitive as it would be with text-only inputs.

How Will ChatGPT-4 Process Images?

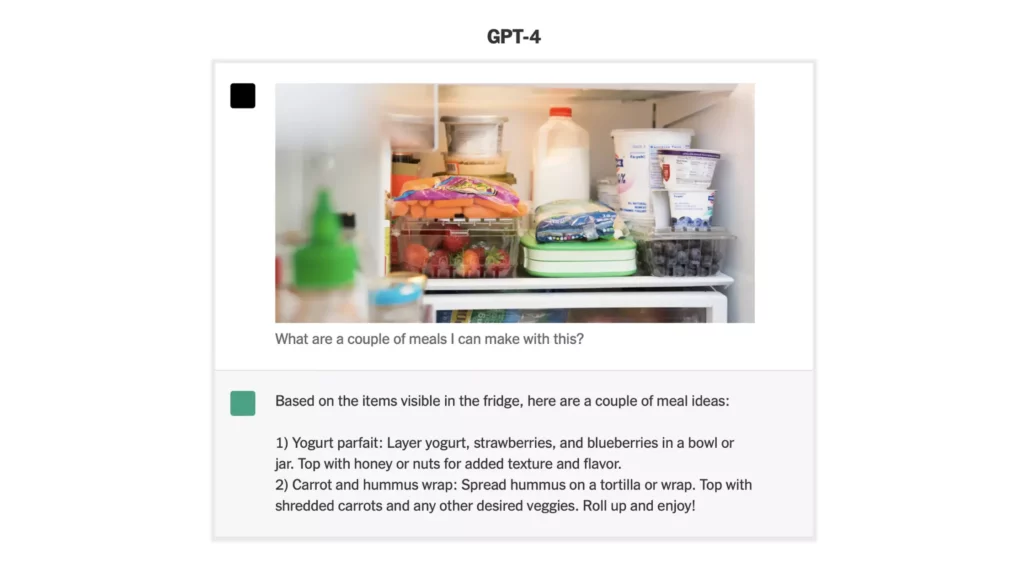

According to OpenAI, GPT-4 can handle both text and image prompts, allowing the user to specify any language or vision task in a manner similar to the text-only setting. It can produce various text outputs, such as natural language and code, based on inputs containing both text and images. Across a range of domains, including documents with text and photos, diagrams, and screenshots, GPT-4 displays capabilities similar to those it shows on text-only inputs.
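
To make the idea of a mixed prompt concrete, here is a minimal Python sketch of how text and image parts could be kept together in order. The class names `TextPart` and `ImagePart`, the `source` field, and the file name are hypothetical and chosen only for illustration; this is not OpenAI's API and it does not send anything to a model.

```python
from dataclasses import dataclass
from typing import List, Union


@dataclass
class TextPart:
    """A piece of text in the prompt (hypothetical type)."""
    text: str


@dataclass
class ImagePart:
    """An image in the prompt; `source` is a hypothetical path or URL field."""
    source: str


PromptPart = Union[TextPart, ImagePart]


def describe_prompt(parts: List[PromptPart]) -> str:
    """Return a one-line summary of a mixed prompt, preserving part order."""
    labels = []
    for part in parts:
        if isinstance(part, TextPart):
            # Whitespace-separated tokens serve as a rough word count.
            labels.append(f"text({len(part.text.split())} words)")
        else:
            labels.append(f"image({part.source})")
    return " + ".join(labels)


# Illustrative prompt: an instruction followed by one image.
prompt = [
    TextPart("Describe this image panel by panel."),
    ImagePart("lightning-cable.png"),
]
print(describe_prompt(prompt))  # text(6 words) + image(lightning-cable.png)
```

Keeping the parts in a single ordered list mirrors the way the text describes image inputs as an extension of the text-only setting rather than a separate mode.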

Additionally, its performance can be improved with test-time techniques that were originally developed for text-only language models, such as chain-of-thought and few-shot prompting. However, the use of image inputs is still in the experimental stage and not yet accessible to the public.
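
As a rough illustration of the two prompting techniques mentioned above, the sketch below assembles a text prompt from a few worked examples (few-shot) and optionally ends with a step-by-step cue (chain-of-thought). The function name `build_few_shot_prompt`, the `Q:`/`A:` layout, and the sample question are assumptions made for this example, not a format prescribed by OpenAI.

```python
from typing import List, Tuple


def build_few_shot_prompt(
    examples: List[Tuple[str, str]],
    question: str,
    chain_of_thought: bool = True,
) -> str:
    """Assemble a plain-text prompt from worked examples plus a new question."""
    # Few-shot: each worked example shows the model the expected answer style.
    blocks = [f"Q: {q}\nA: {a}" for q, a in examples]
    # Chain-of-thought: a commonly used cue, not an official requirement.
    cue = "Let's think step by step." if chain_of_thought else ""
    blocks.append(f"Q: {question}\nA: {cue}".rstrip())
    return "\n\n".join(blocks)


# Illustrative example data, invented for this sketch.
examples = [
    ("What is 2 + 3?", "2 plus 3 makes 5. The answer is 5."),
]
print(build_few_shot_prompt(examples, "What is 4 + 7?"))
```

The resulting string would be sent as the text portion of a prompt; in a multimodal setting, the same examples could in principle be paired with images.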

As an example on its site, OpenAI gave GPT-4 an image and asked it to describe the image panel by panel. GPT-4 described it as follows:

The picture depicts packaging for an adapter called “Lightning Cable,” featuring three panels:

Panel 1: A mobile device is shown with a VGA connector, which is a 15-pin, big, blue plug that is typically employed for computer displays, inserted into its charging port.

Panel 2: The packaging of the “Lightning Cable” adapter features an image of a VGA connector.

Panel 3: The VGA connector is shown in close-up, with a small Lightning connector attached to its end, which is utilized for charging Apple devices like iPhones.

The image’s comedic effect stems from the ludicrousness of plugging an outmoded and sizable VGA connector into a petite, contemporary smartphone charging port.

The sophistication of the answer provided by GPT-4 hints at how far this technology could take image processing and understanding.

Wrapping Up

The latest iteration of OpenAI’s language model, GPT-4, has made significant strides in its image processing capabilities, allowing it to comprehend and analyze images alongside text inputs. This new multimodal feature has opened up a range of possibilities for AI in various domains, including medical billing and legal defense, where GPT-4 can automatically identify errors and compare prices across various institutions.

While image inputs remain a research preview and are not yet publicly available, the potential of this new technology is promising, and its eventual release could revolutionize the field of AI and its applications in various industries.

We hope this article helped you understand why ChatGPT 4 refuses to look at pictures from its users.

Frequently Asked Questions

Does ChatGPT-4 Have a Video Processing Feature?

No, there is no confirmation that ChatGPT-4 has a video processing feature.

What Is the Character Limit of ChatGPT 4?

According to OpenAI, ChatGPT 4 can process up to 25,000 words at a time.

When Will the Image Prompts Feature of ChatGPT 4 Be Available to Users?

As of now, OpenAI has not specified when the image feature of ChatGPT 4 will be available to users.